SQUEEZE MUSIC Project

- ROLE

- Concept, Engineering

- DELIVERABLE

- Installation

- DATE

- Aug 2015

SQUEEZE MUSIC is a jukebox juice maker that automatically analyzes a song and mixes a drink based on the mood of the song chosen. Our roles in this project were planning, technical direction, and software development.

Hack music with technology

In 2015, we joined the hackathon “Music Hack Day Tokyo” with some talented friends and built a prototype in one day. The theme was “hack music with technology”. We came up with the idea of “tasting music” while eating ramen. Because the idea was well received, we updated and improved the prototype in 2016.

Let’s drink music!

SQUEEZE MUSIC is the world's first jukebox that makes it possible to “taste” music. It automatically analyzes a song and mixes drinks according to the mood of the song chosen. The idea behind this concept is the “extension of the music experience”. Until now, music has engaged only the visual and auditory senses; SQUEEZE MUSIC extends it to the sense of taste. And it is not limited to juice: coffee made by SQUEEZE MUSIC from your favourite music could wake you up in the morning, and musicians could play during a concert to make cocktails for the audience. SQUEEZE MUSIC has many possible applications, such as letting hearing-impaired people drink music. Musicians can express their music without language. SQUEEZE MUSIC makes the music experience more universal and nonverbal.

Music moods are converted into tastes

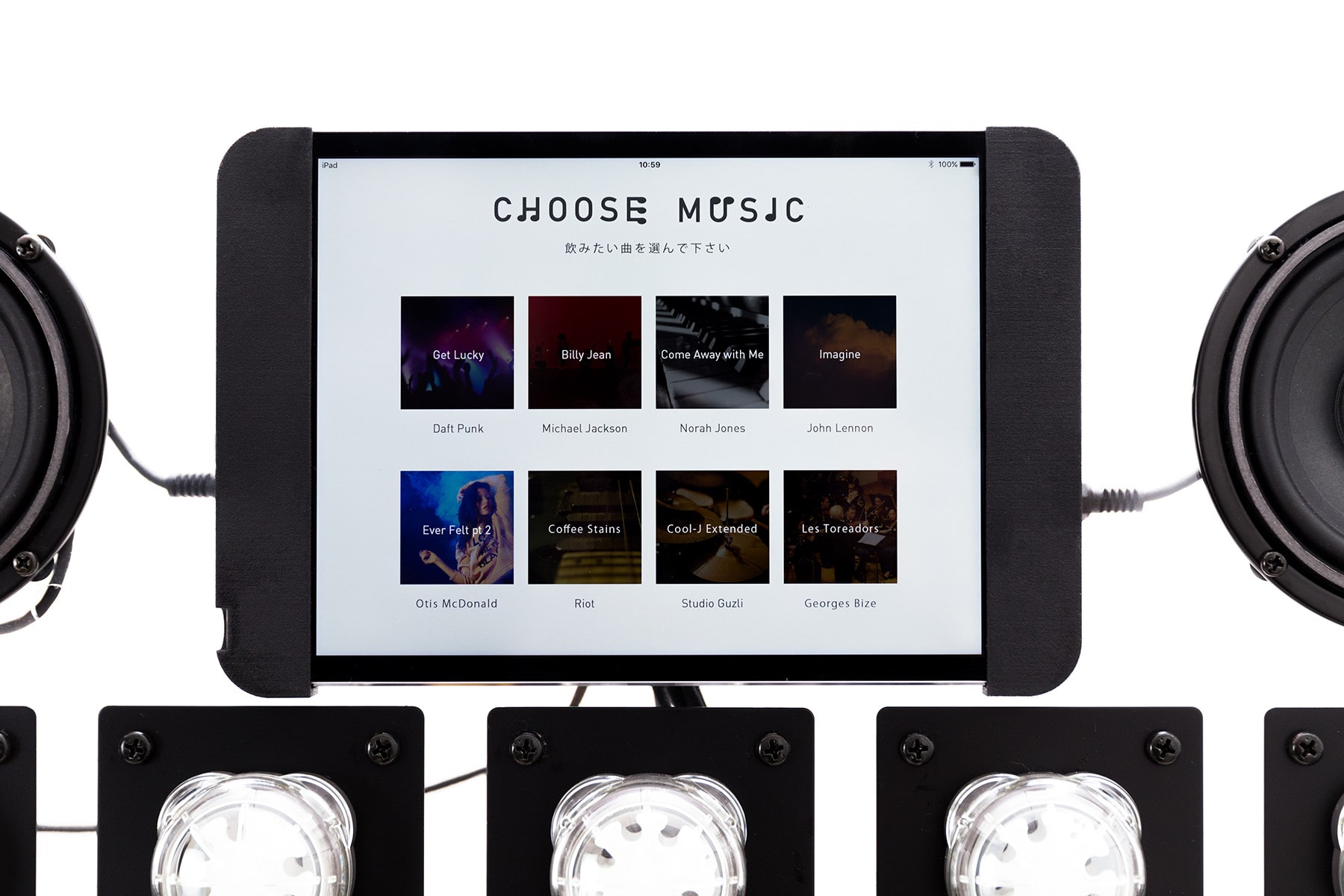

First, the user selects a song on the SQUEEZE MUSIC tablet interface. The machine then analyzes the song's mood in terms of five feelings. Each feeling is converted to a taste: happy becomes sweet, exciting becomes sour, romantic becomes astringent, sentimental becomes salty, and sad becomes bitter. Based on this analysis, SQUEEZE MUSIC creates a blended juice. Now it’s time to enjoy your juice!
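The feeling-to-taste conversion can be pictured as a small lookup table plus a proportional blend. The sketch below is hypothetical: it assumes the analysis yields a non-negative score for each feeling, and that each taste's share of the drink is simply its score divided by the total. The names `MOOD_TO_TASTE`, `blend_ratios`, `pour_volumes` and the 200 ml default cup size are illustrative assumptions, not the project's actual code.

```python
from typing import Dict

# Feeling -> taste mapping as described above.
MOOD_TO_TASTE: Dict[str, str] = {
    "happy": "sweet",
    "exciting": "sour",
    "romantic": "astringent",
    "sentimental": "salty",
    "sad": "bitter",
}


def blend_ratios(mood_scores: Dict[str, float]) -> Dict[str, float]:
    """Hypothetical: turn per-feeling scores into taste proportions summing to 1."""
    unknown = set(mood_scores) - set(MOOD_TO_TASTE)
    if unknown:
        raise ValueError(f"unknown feelings: {sorted(unknown)}")
    if any(score < 0 for score in mood_scores.values()):
        raise ValueError("feeling scores must be non-negative")
    total = sum(mood_scores.values())
    if total == 0:
        raise ValueError("at least one feeling score must be positive")
    # Each taste gets a share proportional to its feeling's score.
    return {MOOD_TO_TASTE[mood]: score / total for mood, score in mood_scores.items()}


def pour_volumes(ratios: Dict[str, float], total_ml: float = 200.0) -> Dict[str, float]:
    """Hypothetical: scale proportions to millilitres for an assumed cup size."""
    return {taste: round(share * total_ml, 1) for taste, share in ratios.items()}


if __name__ == "__main__":
    ratios = blend_ratios({"happy": 3, "exciting": 1})
    print(ratios)                # {'sweet': 0.75, 'sour': 0.25}
    print(pour_volumes(ratios))  # {'sweet': 150.0, 'sour': 50.0}
```

A proportional blend keeps the dominant mood dominant in the glass; a real machine would also need per-ingredient calibration, which this sketch leaves out.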

Connecting software and hardware

For the software, we used PHP, JavaScript, Swift and the Gracenote API. For the hardware, we used pumps, motors and various materials that we found in the parts shops of Akihabara. Synchronizing the timing of the pumps and motors with the music was one of the biggest challenges. During the prototyping stage, we used an optical sensor to receive signals from the software through an iPad. We experimented with different materials until we got the timing right.

Media coverage and awards in Japan and abroad

This project won the GOOD DESIGN AWARD in 2017, our first time receiving the award. Over two years, SQUEEZE MUSIC was featured by more than 400 media outlets, including web media, TV shows and radio programs. It has been exhibited both in Japan and overseas, including at “GOOD DESIGN AWARD EXHIBITION 2017” and “INNOVATION WORLD FESTA 2018” in Japan and the “GOLDEN MELODY AWARD 2018” in Taiwan. These showcases excited audiences and brought this new experience to many people.

CREDITS

- CLIENT

- Internal Project

- ROLE

- Concept, Engineering

- THE TEAM

- Project Leader: Akinori Goto (NOMLAB)

- Planner: Naoya Kudo (Dentsu)

- Planner: Yuma Shingai (Dentsu)

- Art Director: Hami Matsunaga (Dentsu)

- Technical Director & Engineer: Shun Okada (monopo Tokyo)

- PR: Midori Sugama (monopo Tokyo)

- PR: Keita Niiro (monopo Tokyo)